Let’s be honest: OpenClaw changed everything. When it first shipped, the idea of a single AI assistant that lives across all your messaging channels felt like science fiction. Today it’s table stakes. But as more teams move from “let’s try this” to “let’s deploy this for real,” the cracks in the original are showing — and a new generation of claw-like agents is stepping in to fill the gaps.

We’ve been building in this space for a while now, and we talk to enterprise teams every week who are evaluating their options. Here’s what the landscape actually looks like in 2026, and why we think it matters.

The Original: OpenClaw

You can’t have this conversation without starting here. OpenClaw is the most mature project in the space — 23+ channel adapters, a skills marketplace (ClawHub), voice wake mode, browser control, cron scheduling, Canvas with A2UI. It’s the kitchen sink, and for many teams, that’s exactly what they want.

But enterprise teams keep running into the same friction points. The ~500 MB memory footprint and 6-second startup feel heavy when you’re deploying hundreds of instances. The security model is application-level — permission checks in code, not actual OS isolation. And at ~400 source files with 53 configuration surfaces, onboarding new engineers takes longer than anyone admits.

If your team has the ops muscle and wants broad channel coverage and the most mature ecosystem out of the box, OpenClaw is still a defensible choice. But if you’re evaluating with fresh eyes, keep reading.

NanoClaw: The Minimalist Thesis

NanoClaw took the opposite bet: what if the entire codebase was small enough that one engineer could understand it in an afternoon? It’s a single Node.js process, a handful of files, and true container isolation — not permission checks, but actual Docker or Apple Container boundaries per group.
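
To make the difference between permission checks and container boundaries concrete, here is a minimal sketch of what "one container per group" could look like. It is not NanoClaw's actual code: the function name, image name, and paths are hypothetical, and the memory cap is an arbitrary illustrative value. Only standard `docker run` flags are used.

```typescript
// Hypothetical sketch: one container per chat group, built from standard docker CLI flags.
// Not NanoClaw's real implementation; names, paths, and limits are illustrative assumptions.

interface GroupSandbox {
  groupId: string;      // identifier of the chat group (assumed: letters, digits, dashes)
  workspaceDir: string; // host directory that only this group's container may see
  image: string;        // container image that runs the agent (hypothetical)
}

function dockerRunArgs(sandbox: GroupSandbox): string[] {
  // Reject ids that could smuggle unexpected characters into the container name.
  if (!/^[A-Za-z0-9-]+$/.test(sandbox.groupId)) {
    throw new Error(`invalid group id: ${sandbox.groupId}`);
  }
  return [
    "run",
    "--rm",                                     // remove the container when it exits
    "--name", `agent-${sandbox.groupId}`,       // one named container per group
    "--network", "none",                        // no network access from inside the sandbox
    "--read-only",                              // root filesystem is read-only
    "--memory", "256m",                         // cap memory per group (arbitrary value)
    "-v", `${sandbox.workspaceDir}:/workspace`, // only this group's directory is mounted
    sandbox.image,
  ];
}

const args = dockerRunArgs({
  groupId: "family-chat",
  workspaceDir: "/srv/groups/family-chat",
  image: "example/agent:latest",
});
console.log(["docker", ...args].join(" "));
```

The point of the sketch is that the boundary is enforced by the container runtime rather than by checks inside the agent's own process. A real deployment would likely need some controlled network egress to reach a model provider, which `--network none` deliberately leaves out here.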

The Agent Swarms feature is genuinely novel. NanoClaw was the first personal assistant framework to ship it, and it unlocks parallel task execution patterns that larger tools are still catching up on. The trade-off is ecosystem breadth — fewer channels, fewer integrations, and customization means changing code, not toggling config flags. For teams that want deep control and can live with five solid channels instead of twenty-three, NanoClaw punches well above its weight.

NullClaw: The Edge Play

This one is wild. NullClaw compiles to a 678 KB static Zig binary, runs in under 1 MB of RAM, and cold-starts in less than 2 milliseconds. On paper, those numbers shouldn’t be possible for something that supports 50+ LLM providers and 19 channels.

The architecture is vtable-driven — every component (providers, channels, tools, memory) is a swappable interface. You can compile exactly the feature set you need. For edge deployments, embedded devices, or that $5 ARM board sitting in a closet, nothing else comes close. The catch is Zig itself: smaller talent pool, steeper onboarding, and the project is still pre-1.0. Enterprise teams running fleets of lightweight agents on constrained hardware should absolutely evaluate this. Everyone else can admire it from a distance.
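
The swappable-interface idea is easier to see in code. The sketch below is an analogy in TypeScript, not NullClaw's API: NullClaw is written in Zig, and every name here (`Provider`, `Channel`, `EchoProvider`, `MemoryChannel`, `Agent`) is made up for illustration.

```typescript
// Illustrative analogy only: NullClaw is written in Zig, and these names are hypothetical.
// Each component kind is an interface; the agent never depends on a concrete implementation.

interface Provider {
  complete(prompt: string): string;
}

interface Channel {
  send(text: string): void;
}

// A fake provider standing in for a real LLM backend.
class EchoProvider implements Provider {
  complete(prompt: string): string {
    return `echo: ${prompt}`;
  }
}

// A channel that records messages in memory instead of calling a chat service.
class MemoryChannel implements Channel {
  readonly sent: string[] = [];
  send(text: string): void {
    this.sent.push(text);
  }
}

// The agent is wired from interfaces, so either side can be swapped independently.
class Agent {
  private provider: Provider;
  private channel: Channel;

  constructor(provider: Provider, channel: Channel) {
    this.provider = provider;
    this.channel = channel;
  }

  handle(message: string): void {
    this.channel.send(this.provider.complete(message));
  }
}

const channel = new MemoryChannel();
new Agent(new EchoProvider(), channel).handle("hello");
console.log(channel.sent);
```

In a compiled language like Zig, the same pattern also lets a build leave out implementations that are never referenced, which is how a project can ship only the feature set it needs.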

OpenFang: The Security-First Heavyweight

If NullClaw is the lightweight champion, OpenFang is the enterprise heavyweight. Written in Rust across 14 crates and 137K lines of code, it positions itself as an “Agent Operating System,” and the framing is earned. It ships sixteen distinct security layers, including Merkle audit trails, taint tracking, Ed25519 signing, and SSRF protection, along with WASM-metered sandboxing for tool execution and forty channel adapters.

The killer feature is Hands: pre-built autonomous capability packages for lead generation, OSINT collection, video processing, research, and social media management. These run 24/7 without user prompting, which is exactly what enterprise operations teams want. The footprint is modest for the feature set: cold start under 200 ms, 40 MB of memory, and a 32 MB binary. The downside: it’s Rust, it’s complex, and at v0.3.30 it’s still pre-1.0. But for organizations where audit trail and security posture are non-negotiable, OpenFang is the answer today.
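
To put the memory figures quoted so far side by side, here is a back-of-the-envelope calculation. The per-instance numbers come from this article; the fleet size of 300 is an assumption chosen only to stand in for "hundreds of instances," and NullClaw's "under 1 MB" is rounded up to 1 MB.

```typescript
// Per-instance memory figures quoted in this article, in MB.
// NullClaw's "under 1 MB" is treated as an upper bound of 1 MB.
const memoryPerInstanceMb: Record<string, number> = {
  OpenClaw: 500,
  OpenFang: 40,
  NullClaw: 1,
};

// Assumption for illustration: a fleet of 300 instances.
const instances = 300;

// Total fleet memory in decimal gigabytes (1 GB = 1000 MB).
function fleetMemoryGb(perInstanceMb: number, count: number): number {
  return (perInstanceMb * count) / 1000;
}

for (const [name, mb] of Object.entries(memoryPerInstanceMb)) {
  console.log(`${name}: ${fleetMemoryGb(mb, instances)} GB for ${instances} instances`);
}
```

Under these assumptions the fleet needs roughly 150 GB for OpenClaw, 12 GB for OpenFang, and 0.3 GB for NullClaw. The totals ignore model inference, per-session state, and container overhead, so treat them as a comparison of baselines rather than a sizing guide.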

CoPaw: The APAC Bridge

CoPaw comes from AgentScope and targets a gap that Western-built tools consistently miss: first-class support for DingTalk, Feishu, and QQ alongside the usual Discord/Slack/Telegram stack. Desktop installers for Windows and macOS, a web console for configuration, and Python-based skill authoring make it the most accessible option for non-developer users.

For enterprises with significant APAC operations — especially teams in China — CoPaw solves a localization problem that no other tool in this list even attempts seriously. The trade-off is the usual Python story: heavier runtime, less security hardening, more cloud dependency.

Hermes Agent: The Learning Loop

Nous Research’s Hermes Agent is the only project here that genuinely improves itself during use. It creates skills from experience, refines them over time, searches past conversations, and builds persistent user profiles. The closed learning loop — where the agent curates its own memory with periodic nudges — is architecturally distinct from everything else in this space.

For research teams and organizations betting on long-horizon agent deployments where accumulated knowledge is the moat, Hermes is uniquely compelling. It’s also the most research-oriented tool here, with built-in support for trajectory generation and RL environments. Less polished for day-one enterprise deployment, but the trajectory (pun intended) is clear.

HybridClaw: Where We Landed

We built HybridClaw because we kept seeing the same gap. Enterprise teams wanted OpenClaw’s feature depth but with actual security isolation, EU-stack compatibility, and GDPR-aligned data handling — without the ops burden of running Rust or Zig in production.

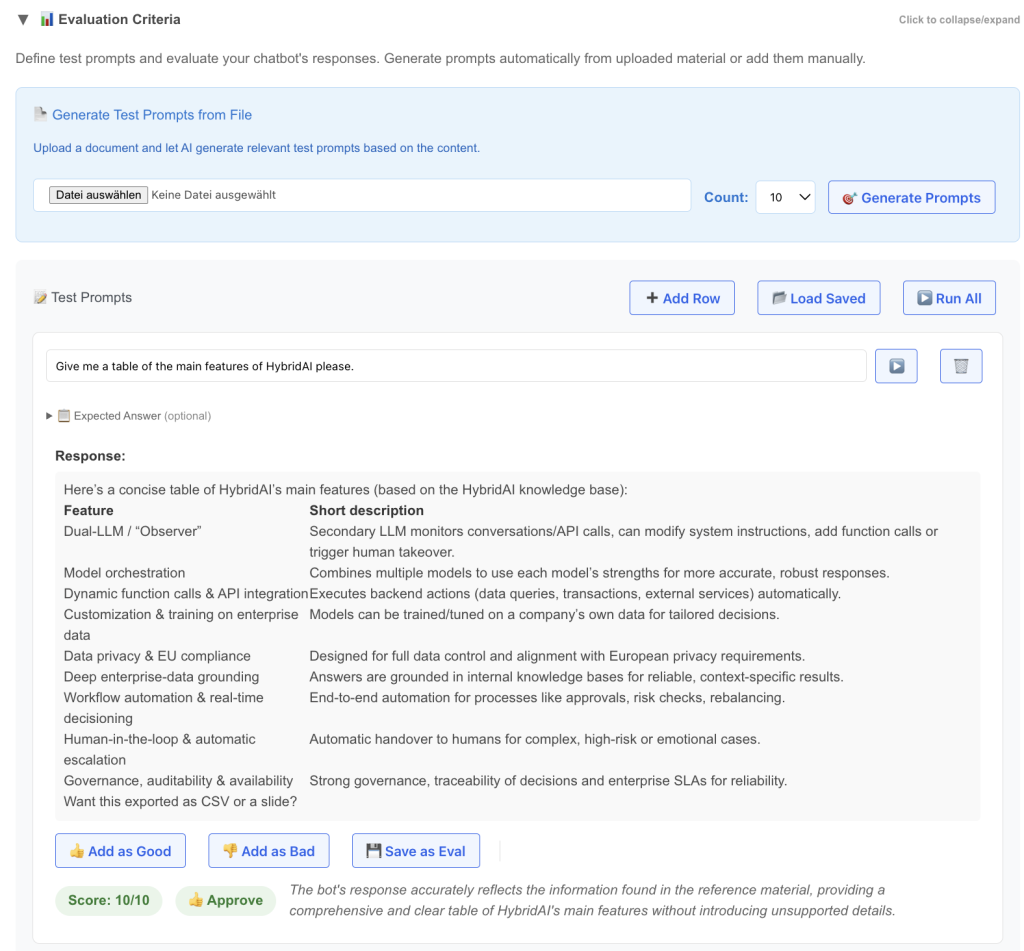

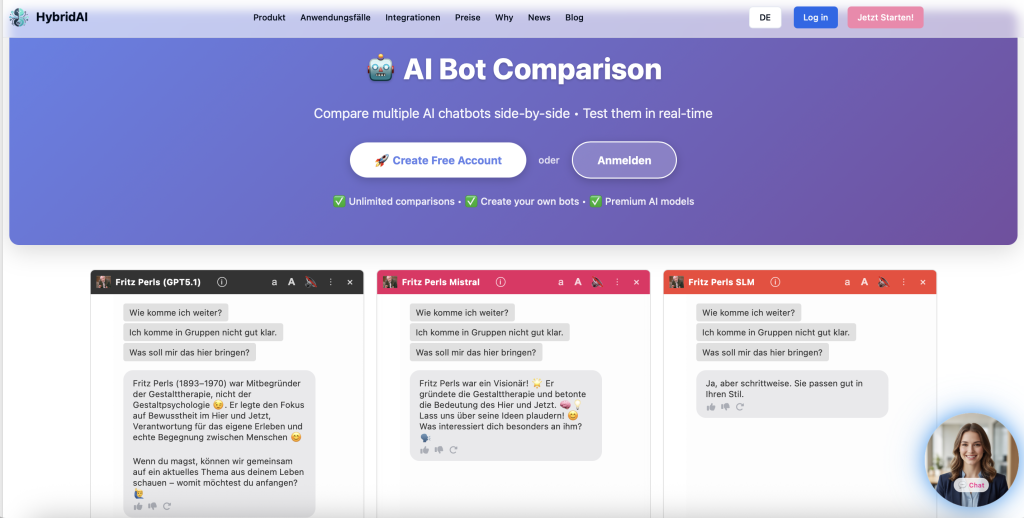

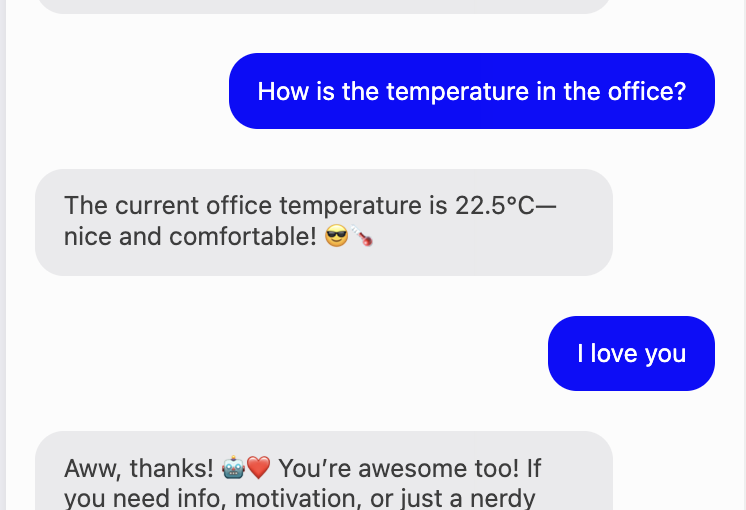

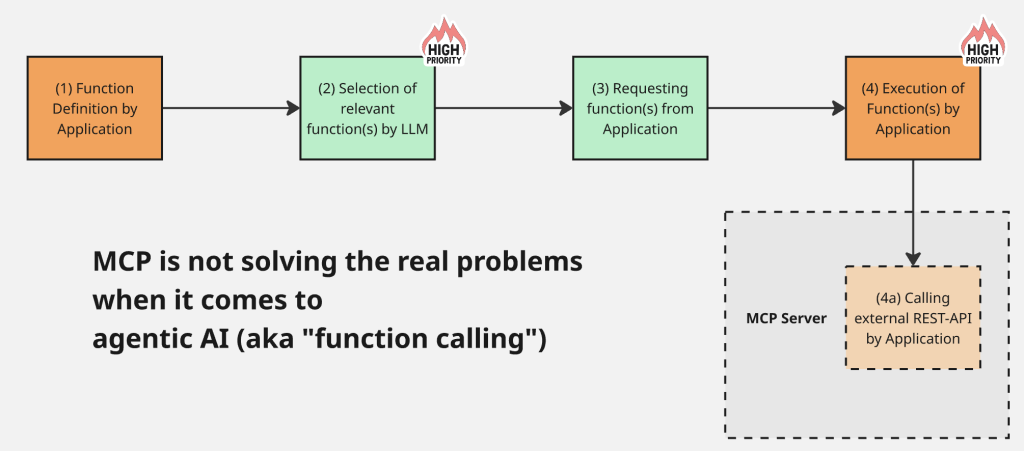

HybridClaw runs as a Node.js gateway with Docker-sandboxed tool execution. It adds RAG-powered retrieval with document-grounded responses and structured audit trails with hash-chain verification. Bundled office skills (PDF, XLSX, DOCX, PPTX) handle the kind of document workflows enterprises actually need, not just chat. MCP integration provides extensibility, and local model support via LM Studio, Ollama, or vLLM covers air-gapped deployments. A built-in admin console offers a dashboard, session management, model configuration, and audit views.
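
For readers unfamiliar with hash-chain verification, the sketch below shows the general technique: each audit entry stores the hash of the previous one, so changing an earlier entry invalidates everything after it. This is a generic illustration, not HybridClaw's actual log format; the `AuditEntry` shape, the function names, and the example events are all hypothetical.

```typescript
import { createHash } from "node:crypto";

// Hypothetical sketch of a hash-chained audit log; not HybridClaw's actual format.
interface AuditEntry {
  event: string;    // what happened
  prevHash: string; // hash of the previous entry ("" for the first entry)
  hash: string;     // SHA-256 over prevHash, a separator, and the event
}

function hashEntry(prevHash: string, event: string): string {
  return createHash("sha256").update(prevHash).update("\n").update(event).digest("hex");
}

// Returns a new log with the event appended and linked to the previous entry.
function append(log: AuditEntry[], event: string): AuditEntry[] {
  const prevHash = log.length > 0 ? log[log.length - 1].hash : "";
  return [...log, { event, prevHash, hash: hashEntry(prevHash, event) }];
}

// Recomputes every hash from the start; an edited or reordered entry breaks the chain.
function verify(log: AuditEntry[]): boolean {
  let prevHash = "";
  for (const entry of log) {
    if (entry.prevHash !== prevHash || entry.hash !== hashEntry(prevHash, entry.event)) {
      return false;
    }
    prevHash = entry.hash;
  }
  return true;
}

let log: AuditEntry[] = [];
log = append(log, "session.start user=alice");
log = append(log, "tool.run name=pdf-extract");
console.log(verify(log));

// Rewriting the first event without recomputing hashes is detected.
const tampered = log.map((e, i) =>
  i === 0 ? { ...e, event: "session.start user=mallory" } : e,
);
console.log(verify(tampered));
```

A plain chain like this detects edits and reordering but not truncation at the end of the log. Catching that would require anchoring the latest hash somewhere outside the log itself.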

What we think makes the difference: HybridClaw treats security and compliance as first-class architectural decisions, not afterthoughts bolted on top. Container isolation by default. Credentials separated from config. An onboarding flow that requires explicit trust model acceptance before anything runs. And all of it in TypeScript — which means your team can actually audit, extend, and maintain it without hiring Zig or Rust specialists.

Is it the smallest? No, that’s NullClaw. The most channels? No, OpenFang. The most autonomous? Hermes has that covered. But for European enterprises that need a production-ready agent with real security, document workflows, and a codebase their existing team can own — that’s the gap we built for.

The Takeaway

The “claw-like agent” space in 2026 is no longer a one-horse race. OpenClaw set the template, but the next generation is fragmenting along clear lines: minimalism (NanoClaw, NullClaw), security-first (OpenFang, HybridClaw), regional fit (CoPaw), and self-improvement (Hermes). The right choice depends on your constraints — not on who shipped first.

Pick the tool that matches your actual deployment reality, not the one with the longest feature list.